Why Engineers Don’t Trust AI (And What That Means for Your Visibility)

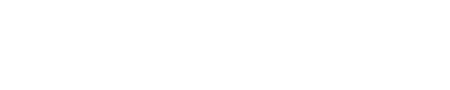

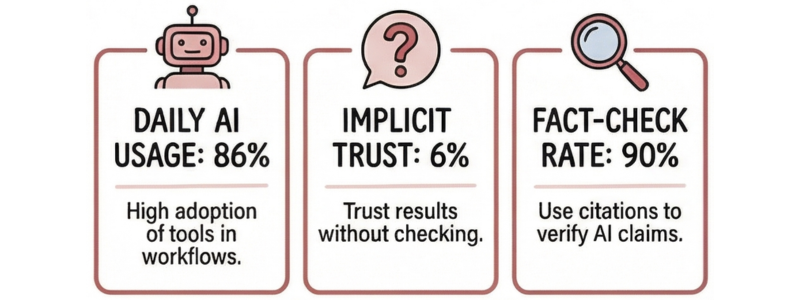

Eighty-six percent of US engineers use AI tools in their daily work. Only 6% trust the results without checking them first. Nearly nine in ten verify AI outputs before acting on them: running back-of-the-envelope calculations, pulling datasheets, cross-referencing textbooks and industry manuals (Omni Calculator, 2026).

Massive adoption. Near-zero trust.

Most marketing and GTM leaders in the electronics industry read this data and see a problem. AI is answering engineering questions before they reach a vendor website. Traffic is declining. The traditional path from search to content to sales is fragmenting.

But there is another reading of the same data. Engineers who do not trust AI outputs do not ignore AI. They use it to get to a shortlist, then they verify. What they verify against is what they trust. The companies whose technical content is accurate, structured, and consistently cited by AI systems are not being bypassed. They are being tested. And they are being found reliable or filtered out before a sales conversation ever begins.

That is the visibility opportunity most electronics manufacturers have not yet recognized.

86% of engineers use AI tools in their daily work. Only 6% trust the results without checking.

Source: Omni Calculator, 2026

Why Adoption and Trust Are Moving in Opposite Directions

AI adoption among engineers accelerated sharply over the last year. Avnet’s 2026 Insights Survey of 1,200 engineers globally found that 56% are now shipping products with AI incorporated into their designs, up from 42% the year before. The tools are embedded in daily workflows: component research, simulation, documentation, design review.

But trust has not moved with adoption. The average trust level among technical buyers fell from 6.5 in 2024 to 4.4 in 2025 (TREW Marketing / GlobalSpec State of Marketing to Engineers). Accuracy and reliability are the primary concerns for 85% of engineers surveyed. Only 9% believe AI improves the accuracy of their work. The other 91% see it as a time-saver, not a truth-teller.

This distinction matters. Engineers are trained to verify. It is not a habit or a preference. It is built into the discipline. When AI surfaces an answer, an engineer’s instinct is to check it. Not because they distrust technology broadly, but because their professional responsibility requires it. A wrong component recommendation in a power design does not produce a revised spreadsheet. It produces a failed board, a redesign cycle, and a delayed program.

The result is a two-step sequence that most B2B content strategies have not yet accounted for: AI surfaces the candidate; engineering judgment makes the call. Understanding that sequence changes how you think about visibility entirely.

What Engineers Do When They Do Not Fully Trust the Answer

When AI surfaces a result, engineers verify it. Fifty-two percent run a manual back-of-the-envelope calculation. Twenty-nine percent check textbooks or industry standards. They look for a second source, one that is authoritative, technically precise, and written by someone who understands the engineering context, not just the marketing angle (Omni Calculator, 2026).

This verification behavior has a direct and measurable consequence for content strategy. Seventy-three percent of B2B buyers now use AI tools like ChatGPT or Perplexity in their research process. Of those, 90% click through to sources cited by AI when they want to fact-check a result (Averi, 2026). That click is not casual browsing. It is deliberate verification. The engineer arrived at your content because an AI system cited it, and they needed to confirm whether it held up under scrutiny.

If your content holds up, with specific application data, accurate parametric information, clear operating conditions, and honest trade-off analysis, you become the trusted source. If it does not, you get filtered out. Vague product claims, outdated specs, and content optimized for search rather than for understanding fail at precisely the moment the engineer is forming a design preference.

This is not a traffic story. It is a credibility story. The question is not how many people land on your page. It is whether the engineers who land there leave with more confidence in your product or expertise than they arrived with.

The companies winning in AI-mediated search are not the ones publishing the most. They are the ones whose content holds up when an engineer checks it. That is a completely different content strategy.

Sannah Vinding

What “Trusted Source” Actually Means in an AI-Mediated Market

Engineers looking to verify an AI result are not searching for content that ranked well on a keyword. They are searching for content that answers the specific question they have with enough technical depth that they can test it against their own judgment.

Forty-seven percent of engineers in the Omni Calculator 2026 survey said they would prefer an LLM trained by engineers with stronger evaluation, provenance, and documentation over a publicly available model. Only 16% said they prefer a public LLM for technical questions. That preference is a signal. Engineers are not looking for faster answers. They are looking for trustworthy answers. And in a technical context, trustworthiness means: is this grounded in real engineering knowledge, can I trace where it came from, and would another engineer agree with it?

Content that meets that bar shares a consistent set of characteristics. It is specific: it addresses a real design decision, not a generic concept. It cites sources and acknowledges the conditions under which a recommendation applies. It does not oversell. It is written by someone who clearly understands failure modes, not just the ideal case. And it uses the language engineers actually use when they are thinking through a problem, not the language a marketing team uses when describing a product.

This is precisely what AI systems learn to cite and what engineers learn to trust. Domain authority in AI-mediated search is not built through link volume. It is built through technical credibility that survives scrutiny. Research on AI citation patterns shows that a page with structured, specific, and accurate technical data can be cited ahead of pages with far more backlinks when its relevance and precision match what the AI system is evaluating (Averi, 2026).

For electronics manufacturers, this is a meaningful shift. The companies investing in technically precise application content, accurate parametric data, and engineering-grounded use cases are building a citation advantage that compounds over time. The companies producing high-volume, generically optimized content are building the opposite.

What Leaders Must Do Next

Three decisions matter now for electronics companies navigating this shift.

The first is to stop treating technical content as a marketing support function and start treating it as commercial infrastructure. The engineer who discovers your application note through an AI citation and finds it accurate is forming a design preference before any sales conversation begins. Sixty percent of the B2B buying process happens online before engineers engage with sales (TREW Marketing / GlobalSpec, 2025). The content layer is already doing selling work. The question is whether it is doing it well.

The second is to audit existing content for engineering credibility, not just search performance. The right question is not “does this rank?” It is “would an engineer trust this enough to verify a decision against it?” The types of content that survive verification are application notes, parametric data organized around real use cases, technical comparisons that acknowledge trade-offs, and content that is explicit about the conditions under which a product performs. Generic product pages that lead with features and stop at specifications do not.

The third is to structure content for AI citation as well as human reading. Structured data, clear definitions, specific statistics with named sources, and content organized around the questions engineers actually ask in design reviews are the signals AI systems use to decide what to cite. The manufacturers that earn consistent citation in AI-mediated technical search are not winning on publishing frequency. They are winning on precision and engineering credibility.

Trust Is the Gatekeeper

Engineers are not afraid of AI. They are applying the same rigor to AI outputs that they apply to everything else in their discipline: verify before you trust. The companies that become the reliable reference point in that verification step will hold the most influence in the design-in process over the next several years.

The trust gap is not a problem to solve. It is a position to earn.

Sannah Vinding

Engineer and Go-To-Market Leader

I’m an engineer and go-to-market leader with global experience across electronics and semiconductor businesses. I work at the intersection of product, engineering, and marketing, translating technical detail into clear positioning, usable content, and GTM systems that teams actually use. My focus is on practical execution, product clarity, and applying AI where it removes friction rather than adding noise.

If this resonated, read these next

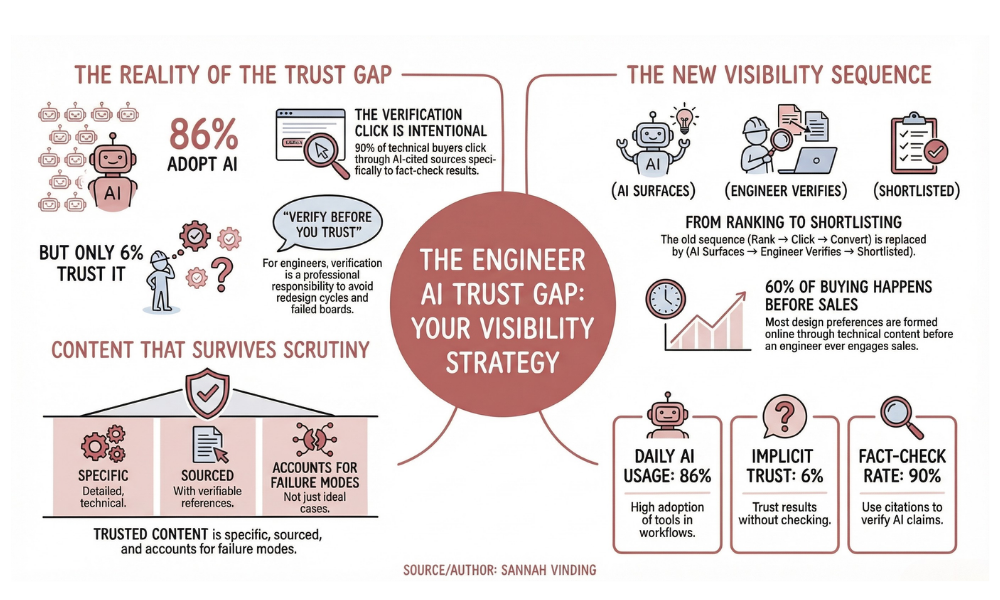

Stop Being a Content Factory: Your Marketing Team Needs a Reboot

Marketing in electronics is no longer about content volume. Learn why Product Marketing must map claims to proof and why machine-readable data now determines visibility with engineers and AI.

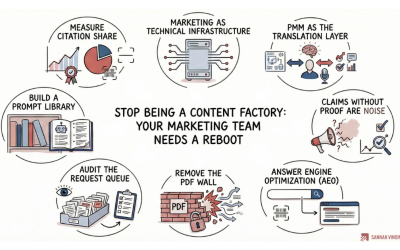

Stop Copying SaaS Playbooks: The Engineering-Driven GTM Operating System for 2026

Why SaaS go-to-market models fail in electronics and what engineering-driven teams must build instead. A practical GTM operating model for AI-driven discovery, supply chain volatility, and trust in 2026.

Follow for engineering-driven insight on AI, go-to-market strategy, and B2B growth in complex technical industries.